Why AI Crawler Access Matters

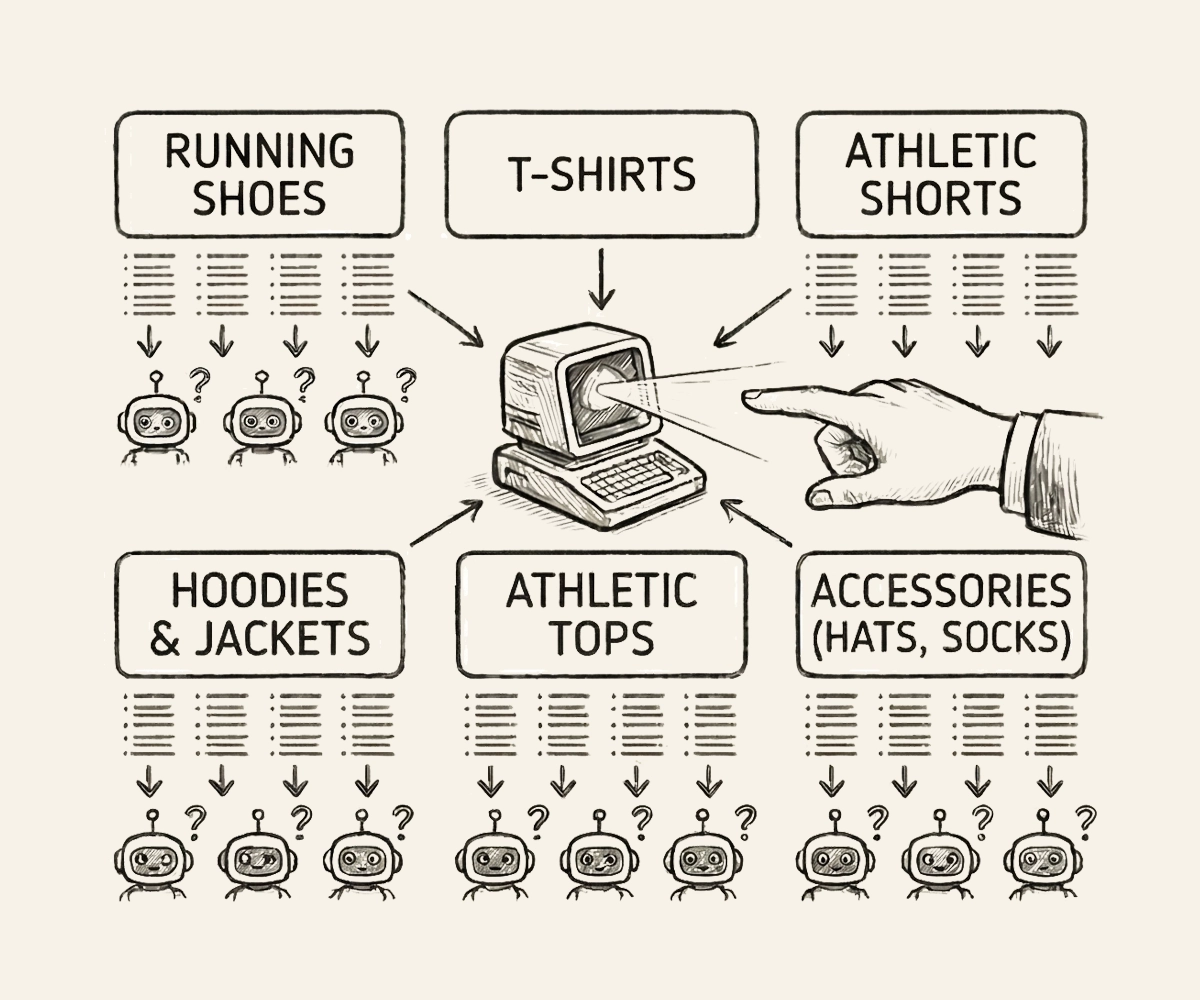

When a potential customer asks ChatGPT to suggest a hotel for their vacation, or asks Gemini to recommend a running shoe, the AI model draws on two sources: knowledge it learned during training, and information it finds by searching the web in real time. Both of these mechanisms depend on AI crawlers being able to access your website.

Your website’s robots.txt file is the gatekeeper. It’s a small text file at the root of your domain that tells web crawlers (including AI-specific ones) what they’re allowed to access. Many websites use restrictive configurations, often put in place by IT teams to reduce server load. The result is that AI models can be silently blocked from your content without anyone on your team knowing it.
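To make the gatekeeper idea concrete, here is a minimal sketch that checks which AI crawlers a robots.txt file turns away, using Python’s standard-library parser. The sample rules, the `example.com` URL, and the `blocked_crawlers` helper are illustrative assumptions, not taken from any real site; the crawler names are the ones mentioned in this article and should be verified against each vendor’s current documentation.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt: blocks two crawlers by name and keeps
# every other crawler out of /admin/ only.
SAMPLE_ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /

User-agent: ClaudeBot
Disallow: /

User-agent: *
Disallow: /admin/
"""

# Crawler names mentioned in this article (assumed to be the current tokens).
AI_CRAWLERS = ["GPTBot", "ClaudeBot", "ChatGPT-User", "PerplexityBot"]


def blocked_crawlers(robots_txt, agents, url):
    """Return the agents that the given robots.txt text disallows for url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [agent for agent in agents if not parser.can_fetch(agent, url)]


# Report which of the listed crawlers cannot fetch a sample product page.
print(blocked_crawlers(SAMPLE_ROBOTS_TXT, AI_CRAWLERS, "https://example.com/products"))
```

With these sample rules, GPTBot and ClaudeBot are reported as blocked for the product page, while the other two fall through to the general rule and are allowed. To check a live site instead, `RobotFileParser` also offers `set_url()` and `read()` to fetch the file over the network.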

The Two Types of AI Crawlers

Training crawlers like GPTBot and ClaudeBot collect your website content so it can become part of the model’s knowledge base. When they’re blocked, the AI model’s understanding of your brand stays frozen in time, or worse, relies entirely on third-party sources you don’t control, like review aggregators and forums. Centium measures both what AI can access and what has been indexed to date in each model.

Live search crawlers like ChatGPT-User and PerplexityBot visit your website at the moment a user asks a question. These are the crawlers that generate real-time citations and drive actual referral traffic. Blocking them means AI will answer questions about your category without ever checking your site for the latest information.
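As a rough sketch of how a site might open access to both crawler types, the snippet below generates robots.txt rules that allow each named crawler and then confirms the result with Python’s standard-library parser. The `build_robots_txt` helper, the blanket `Disallow: /` default for all other crawlers, and the `example.com` URL are assumptions made for illustration; a real file would keep whatever existing rules your site needs, and the grouping of crawler names simply follows this article.

```python
from urllib.robotparser import RobotFileParser

# Crawler names as grouped in this article; treat the grouping as illustrative.
TRAINING_CRAWLERS = ["GPTBot", "ClaudeBot"]
LIVE_SEARCH_CRAWLERS = ["ChatGPT-User", "PerplexityBot"]


def build_robots_txt(allowed_agents, default_rule="Disallow: /"):
    """Build robots.txt text that allows the named agents everywhere
    and applies default_rule to every other crawler."""
    groups = [f"User-agent: {agent}\nAllow: /" for agent in allowed_agents]
    groups.append(f"User-agent: *\n{default_rule}")
    return "\n\n".join(groups) + "\n"


robots_txt = build_robots_txt(TRAINING_CRAWLERS + LIVE_SEARCH_CRAWLERS)
print(robots_txt)

# Sanity check: parse the generated text and test each crawler,
# plus one hypothetical crawler that is not on the allow list.
parser = RobotFileParser()
parser.parse(robots_txt.splitlines())
for agent in TRAINING_CRAWLERS + LIVE_SEARCH_CRAWLERS + ["SomeOtherBot"]:
    print(agent, parser.can_fetch(agent, "https://example.com/"))
```

Keeping the two lists separate makes it easy to allow only one type, for example passing just `LIVE_SEARCH_CRAWLERS` if you want real-time citations without contributing content to training. Note that robots.txt is a request that well-behaved crawlers honor, not an enforcement mechanism.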

What Happens When AI Can’t Access Your Site

If your competitors allow AI crawlers and you don’t, AI models are more likely to recommend them over you. Not because they’re better, but because they’re more visible. The AI has more information to work with, more recent data to cite, and more confidence in recommending brands it can actually verify. In a world where AI-assisted purchasing decisions are growing rapidly, robots.txt is no longer just a technical SEO concern. It’s a visibility strategy.